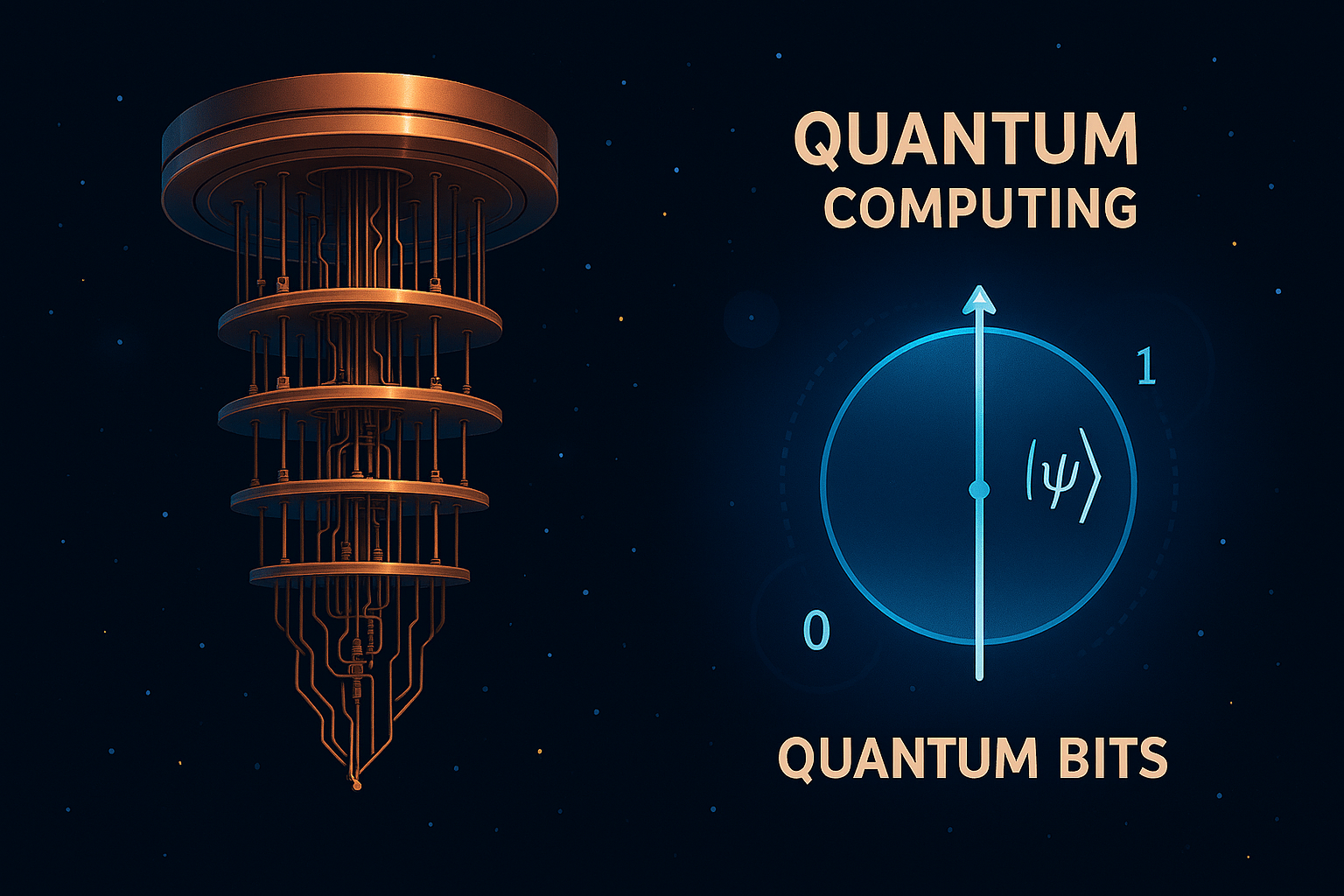

Quantum computing is one of the most exciting frontiers in modern science and technology. Unlike classical computers, which process information using bits (0s and 1s), quantum computers use quantum bits, or qubits, which can exist in a superposition of 0 and 1. This fundamental shift opens the door to solving certain problems that are currently intractable for even the most powerful supercomputers.

What Is Quantum Computing?

At its core, quantum computing is based on the principles of quantum mechanics, the branch of physics that describes the behaviour of particles at the atomic and subatomic levels. The key concepts that make quantum computing possible include:

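- Superposition: A qubit can be in a state of 0, 1, or a weighted combination of both, described by two complex amplitudes. A register of n qubits carries 2^n amplitudes, but a measurement returns only one outcome, so quantum algorithms must be designed to make the useful outcomes likely.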

- Entanglement: Qubits can be entangled, meaning the state of one qubit cannot be described independently of the state of another, no matter how far apart they are. Measurements on entangled qubits show powerful correlations that classical systems can’t replicate.

- Interference: Quantum algorithms utilize interference to amplify correct paths and cancel out incorrect ones, thereby facilitating the efficient discovery of solutions.
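The three concepts above can be made concrete with a tiny simulation. The following is a minimal sketch in plain Python, not how real quantum hardware or any particular library works: it stores a qubit as a pair of complex amplitudes and applies the Hadamard gate by hand. The function names (`hadamard`, `probabilities`, `sample_two_qubits`) are illustrative assumptions, not part of any existing API.

```python
import math
import random

# A single-qubit state is a pair of complex amplitudes (a0, a1)
# with |a0|^2 + |a1|^2 = 1. All names here are illustrative.

def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (a0, a1)."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))

def probabilities(state):
    """Born rule: each outcome's probability is its squared amplitude magnitude."""
    return [abs(a) ** 2 for a in state]

zero = (1 + 0j, 0 + 0j)

# Superposition: one Hadamard gives equal chances of measuring 0 or 1.
plus = hadamard(zero)
superposition_probs = probabilities(plus)

# Interference: a second Hadamard makes the two contributions to outcome 1
# cancel, so the qubit returns to 0 with certainty.
back = hadamard(plus)
interference_probs = probabilities(back)

# Entanglement: the Bell state (|00> + |11>) / sqrt(2), stored as
# amplitudes over the outcomes 00, 01, 10, 11.
bell = [1 / math.sqrt(2), 0.0, 0.0, 1 / math.sqrt(2)]

def sample_two_qubits(state, rng):
    """Sample one measurement outcome as a (bit_a, bit_b) pair."""
    probs = [abs(a) ** 2 for a in state]
    index = rng.choices(range(4), weights=probs)[0]
    return (index >> 1, index & 1)

rng = random.Random(0)
bell_samples = [sample_two_qubits(bell, rng) for _ in range(20)]

print(superposition_probs)
print(interference_probs)
print(bell_samples)
```

Each individual Bell-state outcome is random, yet the two bits always agree. That correlation is what the entanglement bullet describes, and the second Hadamard shows amplitudes cancelling rather than simply adding up like probabilities.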

How Does It Differ from Classical Computing?

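Classical computers perform calculations sequentially or in parallel using bits, each of which holds a definite value at every step. Quantum computers, thanks to superposition and entanglement, can manipulate the amplitudes of many candidate solutions at once, then use interference to make correct answers more likely to be measured. This makes them particularly suited for specific classes of problems: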

- Cryptography: Breaking public-key encryption schemes, such as RSA, whose security rests on the difficulty of factoring large numbers.

- Optimization: Solving logistical and resource allocation problems.

- Simulation: Modelling quantum systems in chemistry and physics.

- Machine Learning: Potentially enhancing some algorithms with quantum speedups, an area that is still under active research.

Theoretical Foundations

Quantum computing theory is built on several mathematical and physical frameworks:

- Linear Algebra: Quantum states are represented as vectors in complex vector spaces.

- Unitary Operations: Quantum gates play the role of classical logic gates, but unlike most classical gates they are reversible, and they are represented by unitary matrices.

- Quantum Algorithms: Famous examples include Shor’s algorithm (for factoring large numbers efficiently) and Grover’s algorithm (for searching unsorted databases with a quadratic speedup).
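To illustrate how these frameworks fit together, here is a small classical simulation of Grover’s algorithm. It is a sketch under stated assumptions rather than a reference implementation: the state is a plain list of real amplitudes, and the function name `grover_search` and its parameters `n_items`, `marked`, and `iterations` are hypothetical. Simulating the state vector classically takes time proportional to the number of items, so the code shows the mechanics of the algorithm, not its speedup.

```python
import math

def grover_search(n_items, marked, iterations=None):
    """Simulate Grover's algorithm on a list of real amplitudes.

    n_items and marked are illustrative parameters.
    Returns the measurement probability of each item.
    """
    # Start in the uniform superposition over all items.
    amps = [1 / math.sqrt(n_items)] * n_items
    if iterations is None:
        # About (pi / 4) * sqrt(N) iterations maximise the success probability.
        iterations = int(math.floor(math.pi / 4 * math.sqrt(n_items)))
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amps[marked] = -amps[marked]
        # Diffusion: reflect every amplitude about the mean.
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]
    return [a * a for a in amps]

probs = grover_search(n_items=8, marked=5)
best = max(range(8), key=lambda i: probs[i])
print(best, round(probs[best], 4))
```

With 8 items the loop runs twice and the marked item ends up with a probability of about 0.945. Both steps are reflections, which are unitary, so the probabilities always sum to 1.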

Challenges and Future Directions

Despite its promise, quantum computing faces significant hurdles:

- Decoherence: Quantum states are fragile and can be disrupted by their environment.

- Error Correction: Quantum error correction is complex and requires many physical qubits to represent a single logical qubit.

- Scalability: Building large-scale quantum computers is still a major engineering challenge.
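The idea of spending several physical units to protect one logical unit can be illustrated with a classical analogy: a three-copy repetition code with majority voting. This is only a sketch. Real quantum error correction must also handle phase errors, cannot simply copy an unknown quantum state, and typically needs far more than three physical qubits per logical qubit. The error rate `p` and the function names below are assumptions chosen for illustration.

```python
import random

def logical_error_rate(p):
    """Probability that a majority vote over 3 copies fails (2 or 3 flips)."""
    return 3 * p**2 * (1 - p) + p**3

def simulate(p, trials, rng):
    """Monte Carlo estimate of the same failure probability."""
    failures = 0
    for _ in range(trials):
        # Each of the 3 copies flips independently with probability p.
        flips = sum(rng.random() < p for _ in range(3))
        if flips >= 2:
            failures += 1
    return failures / trials

p = 0.05  # assumed per-copy error rate, for illustration only
exact = logical_error_rate(p)
estimate = simulate(p, 100_000, random.Random(1))
print(exact, estimate)
```

For small `p` the encoded error rate scales roughly as 3p², so redundancy helps only when the physical error rate is already low. That is one reason decoherence and error correction are so closely linked.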

However, ongoing research and investment are rapidly advancing the field. Companies like IBM, Google, and startups worldwide are racing to build practical quantum computers.

Conclusion

Quantum computing is not just a faster version of classical computing; it’s a fundamentally different paradigm. As we continue to explore and refine this technology, we may unlock solutions to problems that were once thought impossible. The quantum revolution is just beginning, and its impact could be as transformative as the invention of the classical computer itself.